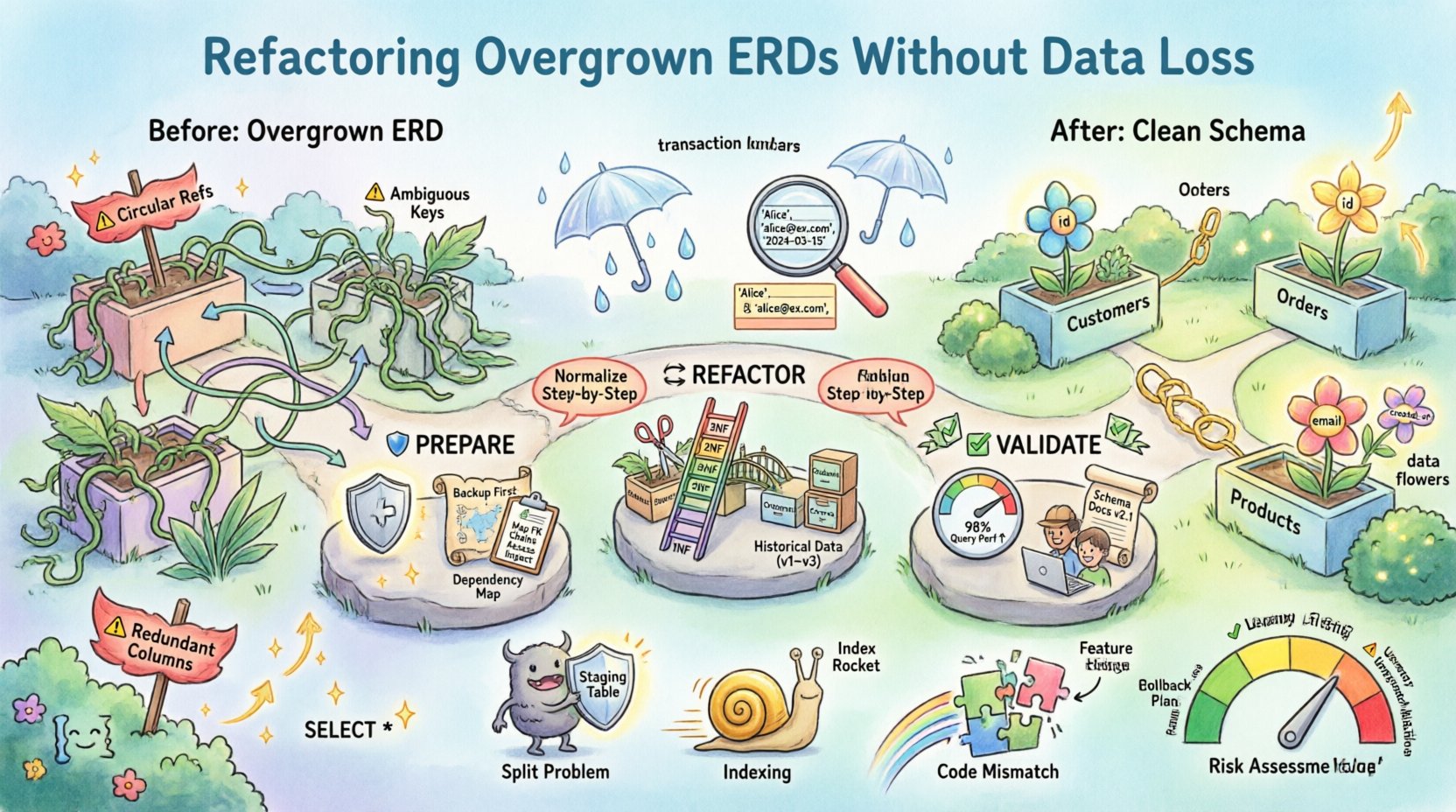

Database schemas are living artifacts. They evolve alongside the business logic they support. Over time, as requirements shift and new features are introduced, the underlying data structure often becomes complex. This complexity manifests visually as an overgrown Entity Relationship Diagram (ERD). An inflated ERD can lead to performance degradation, maintenance nightmares, and an increased risk of data integrity issues.

Refactoring these diagrams is not merely a cosmetic exercise. It is a structural intervention that requires precision. The primary objective is to streamline the schema, improve readability, and optimize query performance while ensuring absolutely no data is lost or corrupted during the transition. This guide provides a structured approach to managing this process.

📉 Why ERDs Become Unmanageable

Understanding the root causes of schema bloat is the first step toward resolution. An ERD that has grown organically without governance often exhibits specific symptoms. Recognizing these patterns allows for targeted intervention.

- Redundant Columns: The same data point is stored in multiple tables. This creates synchronization challenges where updating one instance does not update the other.

- Denormalization Overuse: While denormalization improves read speed, excessive use complicates write operations and increases storage overhead.

- Weak Relationships: Many-to-many relationships are often implemented using single tables with multiple foreign keys, rather than proper junction tables.

- Implicit Business Logic: Data types and constraints may rely on application-level checks rather than database-level enforcement, making the schema fragile.

- Orphaned Entities: Tables exist that are no longer referenced by any active application module but remain in the physical storage.

When these factors accumulate, the ERD becomes a tangled web. Visualizing the relationships becomes difficult, and the risk of introducing errors during any modification increases exponentially.

🛡️ Preparing for Schema Changes

Before touching a single line of DDL (Data Definition Language), a rigorous preparation phase is mandatory. This phase minimizes risk and ensures that a rollback is possible if issues arise.

1. Comprehensive Backup Strategy

Data safety is paramount. A backup is not just a file; it is a verification point.

- Logical Backups: Export schema definitions and data in a human-readable format (such as SQL dumps).

- Physical Snapshots: If the platform supports it, create a point-in-time snapshot of the storage volume.

- Read-Only Replica: If possible, spin up a replica of the production environment. Perform all testing and migration scripts here first.

2. Dependency Mapping

Tables do not exist in isolation. Every entity is referenced by application code, stored procedures, or external reporting tools. You must identify every consumer of the data.

- Review application code for direct table references.

- Check for views or materialized views that depend on specific columns.

- Identify any scheduled jobs or ETL (Extract, Transform, Load) processes that ingest or output data from the affected tables.

3. Impact Analysis

Document the current state. Create a baseline of row counts, data distribution, and query execution times. This baseline allows you to compare the system state before and after refactoring to ensure consistency.

| Checklist Item | Priority | Notes |

|---|---|---|

| Verify Backup Completeness | High | Ensure checksums match source |

| Map All Foreign Keys | High | Document parent-child relationships |

| Identify Active Queries | Medium | Use query logs to find heavy hitters |

| Review Access Controls | Medium | Ensure permissions persist post-migration |

🔄 The Refactoring Methodology

The core of refactoring involves restructuring the logical model. This is often achieved through normalization, though strategic denormalization may be retained for performance. The goal is clarity and integrity.

1. Analyze Current Normalization

Most legacy schemas fall short of Third Normal Form (3NF). Moving toward higher normalization reduces redundancy.

- First Normal Form (1NF): Ensure atomicity. No repeating groups or multi-valued attributes within a single cell.

- Second Normal Form (2NF): Remove partial dependencies. Ensure every non-key attribute is fully dependent on the primary key.

- Third Normal Form (3NF): Remove transitive dependencies. Non-key attributes should depend only on the key, not on other non-key attributes.

| Normalization Level | Key Rule | Benefit |

|---|---|---|

| 1NF | Atomic values only | Eliminates complex parsing logic |

| 2NF | Full dependency on PK | Reduces update anomalies |

| 3NF | No transitive dependencies | Improves data consistency |

2. Decompose Large Entities

When a single table contains too many columns, it often implies distinct business concepts are being conflated. Split these into separate tables.

- Identify groups of columns that describe different entities (e.g., User Profile vs. User Preferences).

- Create a new table for the distinct concept.

- Move the relevant columns to the new table.

- Establish a one-to-one relationship using a Foreign Key.

3. Resolve Many-to-Many Relationships

Directly linking two tables with a column in each is a common anti-pattern. This should be replaced by a junction table.

- Create a new table to act as the bridge.

- Include the Primary Keys from both parent tables as Foreign Keys in the bridge table.

- Add any specific attributes that belong to the relationship itself (e.g., the date a relationship was established).

4. Handle Historical Data

Refactoring often changes how data is stored. Historical records must be preserved accurately.

- Do not simply delete old data. It may be required for audit trails or legal compliance.

- Use migration scripts to transform existing data into the new format before switching the application connection.

- Archive old tables if they are no longer needed but must be retained for record-keeping.

✅ Ensuring Data Integrity

During the transformation, the risk of data corruption is the highest. Integrity constraints are your safety net.

1. Foreign Key Constraints

Enforce referential integrity at the database level. This prevents orphaned records where a child record references a parent that no longer exists.

- Enable

CASCADEupdates or deletes only where logically necessary. - Use

RESTRICTorNO ACTIONto block changes that would break relationships.

2. Transaction Management

Wrap all migration steps in transactions. This ensures that either all changes are applied, or none are. Partial updates lead to inconsistent states.

- Start a transaction before the first DDL command.

- Commit only after all validation checks pass.

- Roll back immediately if an error occurs.

3. Data Validation Scripts

After the migration, run scripts to verify the data.

- Compare row counts between the old and new tables.

- Calculate checksums on critical columns to ensure exact matches.

- Check for null values in columns that were previously not nullable.

- Verify that all unique constraints are satisfied.

⚠️ Common Pitfalls and Solutions

Even with careful planning, issues can arise. Anticipating these problems reduces downtime.

1. The “Split” Problem

When splitting a table, you may encounter duplicate keys. If a composite key is being split, ensure the new keys maintain uniqueness across the new structure.

- Solution: Use temporary staging tables to reorganize data before applying the new schema.

2. Indexing Performance

New relationships require new indexes. Without them, queries on the new junction tables will be slow.

- Solution: Create indexes on Foreign Key columns immediately after creation. Do not rely on the primary key index alone.

3. Application Code Mismatch

The database changes, but the application code does not update immediately. This leads to runtime errors.

- Solution: Implement a feature flag or a dual-write strategy during the transition period. Allow the old and new schemas to coexist briefly.

4. Data Type Mismatches

Refactoring often involves changing data types (e.g., VARCHAR to INT). If the data contains non-numeric characters in a field being converted, the migration will fail.

- Solution: Sanitize the data in a pre-migration step. Create a report of invalid data for manual review.

🚀 Post-Refactoring Validation

The work is not done when the migration script finishes. The system must be validated in a production-like environment.

- Performance Benchmarking: Run the same set of queries used in the baseline check. Compare execution times and resource usage.

- User Acceptance Testing: Have application users perform standard workflows to ensure the data reflects correctly in the UI.

- Monitoring Setup: Enable enhanced logging and monitoring for the specific tables involved. Watch for error spikes or latency increases.

- Documentation Update: Update the ERD diagrams, data dictionaries, and API documentation to reflect the new structure.

📝 Risk Assessment Matrix

| Risk Factor | Impact | Mitigation Strategy |

|---|---|---|

| Unexpected Data Loss | Critical | Verify backups before starting; use transactions |

| Downtime | High | Schedule during maintenance windows; use blue-green deployment |

| Performance Degradation | Medium | Test with production-sized data; optimize indexes |

| Application Breakage | High | Feature flags; gradual rollout |

Refactoring an Entity Relationship Diagram is a disciplined engineering task. It requires a balance between theoretical data modeling principles and practical operational constraints. By following a structured approach, maintaining strict data integrity checks, and preparing thoroughly for the transition, you can modernize your data architecture without compromising the reliability of your information assets.

The complexity of modern systems demands that we remain vigilant. Regular reviews of the ERD should be part of the development lifecycle to prevent overgrowth from becoming a critical issue again. Treat the schema as a critical component of the application’s infrastructure, worthy of the same care and attention as the code itself.

Success in this endeavor is measured by the stability of the system post-migration and the continued accuracy of the data it holds. With patience and precision, the path to a cleaner, more efficient database structure is achievable.