

AI Cloud Architecture: The Foundation of Scalable, Intelligent Cloud Applications


In the era of artificial intelligence (AI) and machine learning (ML), organizations are no longer just building applications—they are building intelligent systems capable of learning, adapting, and delivering real-time insights. At the heart of this transformation lies AI Cloud Architecture—a powerful, purpose-built framework that enables the development, training, deployment, and management of AI/ML workloads at scale.

This comprehensive guide explores the essence of AI cloud architecture, its core components, strategic use cases, implementation best practices, key concepts, and deployment models—empowering enterprises to harness the full potential of AI in the cloud.

🔷 What Is AI Cloud Architecture?

AI Cloud Architecture is the structural design of cloud-based, scalable infrastructure—comprising computing, storage, and networking resources—specifically optimized to support Artificial Intelligence and Machine Learning workloads. It serves as the backbone for building, training, deploying, and managing AI models efficiently and securely.

✅ Definition: It is the framework, comprising infrastructure, data management, and orchestration, that allows AI/ML models to be built, trained, and deployed at scale.

This architecture leverages specialized hardware such as GPUs (Graphics Processing Units) and TPUs (Tensor Processing Units), integrates robust data pipelines, and employs microservices and container orchestration to deliver intelligent, responsive, and scalable applications.

🧱 Core Layers of AI Cloud Architecture

A well-designed AI cloud architecture consists of five foundational layers:

| Layer | Description |
|---|---|
| 1. Infrastructure Layer | Provides high-performance compute (GPUs/TPUs), scalable networking, and resilient storage. Enables parallel processing for large-scale model training. |
| 2. Data Pipeline Layer | Manages ingestion, preprocessing, transformation, and storage of high-velocity, high-volume data from diverse sources (IoT, databases, APIs). |
| 3. AI/ML Model Layer | Houses the machine learning models, both pre-trained and custom, developed using frameworks like TensorFlow, PyTorch, or scikit-learn. |
| 4. Orchestration & MLOps Layer | Automates the lifecycle of models via CI/CD pipelines, versioning, monitoring, and retraining workflows. Built on platforms like Kubernetes, Argo, or cloud-native MLOps tools. |
| 5. Application & Serving Layer | Delivers AI capabilities through APIs, web services, mobile apps, or edge devices. Supports real-time inference and batch predictions. |

These layers work in harmony to create a seamless pipeline from data to decision-making.
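To make the flow between layers concrete, here is a minimal Python sketch of the data pipeline, model, and serving layers, including both a real-time and a batch serving path. Everything in it is hypothetical: the sensor events, the `ingest` function, and the `ThresholdModel` class (a fixed threshold standing in for a trained model). The infrastructure and orchestration layers are out of scope here; in practice they would run these steps as containers or scheduled jobs.

```python
from dataclasses import dataclass
from typing import Iterable

# Hypothetical raw events arriving from IoT sensors (data pipeline layer input).
RAW_EVENTS = [
    {"device": "sensor-a", "temp_c": "21.5"},
    {"device": "sensor-b", "temp_c": "bad-reading"},
    {"device": "sensor-c", "temp_c": "38.0"},
]

def ingest(events: Iterable[dict]) -> list[dict]:
    """Data pipeline layer: parse and keep only well-formed records."""
    clean = []
    for event in events:
        try:
            clean.append({"device": event["device"], "temp_c": float(event["temp_c"])})
        except (KeyError, ValueError):
            continue  # a production pipeline would route these to a dead-letter store
    return clean

@dataclass
class ThresholdModel:
    """Model layer: a fixed threshold standing in for a trained model."""
    threshold_c: float

    def predict(self, temp_c: float) -> bool:
        return temp_c > self.threshold_c

def serve_realtime(model: ThresholdModel, record: dict) -> dict:
    """Serving layer, real-time path: one request in, one prediction out."""
    return {"device": record["device"], "alert": model.predict(record["temp_c"])}

def serve_batch(model: ThresholdModel, records: list[dict]) -> list[dict]:
    """Serving layer, batch path: score a whole dataset, e.g. as a scheduled job."""
    return [serve_realtime(model, r) for r in records]

if __name__ == "__main__":
    model = ThresholdModel(threshold_c=35.0)
    records = ingest(RAW_EVENTS)
    print(serve_realtime(model, records[0]))
    print(serve_batch(model, records))
```

Keeping the real-time and batch paths on the same `predict` call means both serving modes always use identical model logic.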

⚙️ Key Components of AI Cloud Architecture

To realize the full power of AI in the cloud, several critical components must be integrated:

- Kubernetes (K8s): The de facto standard for container orchestration, enabling dynamic scaling and management of AI microservices.
- Serverless Computing: Suited to lightweight or bursty AI inference workloads, with automatic scaling and pay-per-use pricing (e.g., AWS Lambda, Azure Functions). These functions run on CPUs, so large GPU-bound models are typically served on dedicated endpoints instead.
- High-Performance Storage: SSD-backed block storage for low-latency I/O and object storage (e.g., S3, Cloud Storage) for high-throughput access to large training datasets.
- Data Lakes & Warehouses: Centralized repositories. Data lakes (e.g., Amazon S3, Delta Lake) store structured and unstructured data in its raw form, while warehouses (e.g., Snowflake) hold curated, structured data for analytics.
- Model Serving Platforms: Tools like TensorFlow Serving, TorchServe, or cloud-managed solutions (e.g., SageMaker Endpoints) for low-latency inference.
- Monitoring & Observability: Real-time tracking of model performance, drift detection, latency, and system health.

These components ensure resilience, scalability, and operational efficiency across the AI lifecycle.
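Drift detection, listed under Monitoring & Observability, can be sketched with the Population Stability Index (PSI), one common way to compare a feature's production distribution against its training distribution. This is a minimal, standard-library-only sketch; the bin edges, sample values, and the 0.1/0.25 alert thresholds are assumptions (the thresholds are a widely used rule of thumb, not a standard).

```python
import bisect
import math
from typing import Sequence

def bin_proportions(values: Sequence[float], edges: Sequence[float]) -> list[float]:
    """Fraction of values in each bin [edges[i], edges[i+1]); out-of-range values are clamped."""
    n_bins = len(edges) - 1
    counts = [0] * n_bins
    for v in values:
        idx = bisect.bisect_right(edges, v) - 1
        idx = min(max(idx, 0), n_bins - 1)  # clamp values below/above the edge range
        counts[idx] += 1
    return [c / len(values) for c in counts]

def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index: sum over bins of (actual - expected) * ln(actual / expected)."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # floor empty bins so the log stays finite
        total += (a - e) * math.log(a / e)
    return total

def drift_level(score: float) -> str:
    """Rule-of-thumb interpretation (assumed thresholds; tune per feature)."""
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "moderate drift"
    return "significant drift"

if __name__ == "__main__":
    edges = [0, 25, 50, 75, 100]      # hypothetical bins for a 0-100 feature
    training = [10, 30, 60, 90]       # one value per bin
    production = [60, 70, 80, 90]     # mass has shifted to the upper bins
    expected = bin_proportions(training, edges)
    actual = bin_proportions(production, edges)
    score = psi(expected, actual)
    print(f"PSI={score:.3f} -> {drift_level(score)}")
```

In a real deployment, a monitoring job would compute this per feature on a schedule and raise an alert, or trigger retraining, when the score crosses the chosen threshold.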

📌 When to Use AI Cloud Architecture

AI cloud architecture isn’t a one-size-fits-all solution—but it becomes essential under specific conditions:

✅ High-Demand Workloads

When your organization runs resource-intensive AI training jobs, such as large language models (LLMs), computer vision systems, or reinforcement learning agents, you need scalable GPU/TPU clusters that can handle terabytes of data and models with up to billions of parameters.

💡 Example: Training a 100B-parameter LLM typically takes hundreds to thousands of GPUs running distributed training for weeks or months, a scale that is practical only on cloud or supercomputer-class infrastructure.
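A back-of-envelope calculation shows why a model this size needs distributed training. The sketch below uses two common approximations: about 16 bytes of GPU memory per parameter for mixed-precision training with the Adam optimizer (fp16 weights and gradients plus fp32 master weights and optimizer states), and about 6 × parameters × tokens floating-point operations for a full training run. The token count, 80 GB GPU memory, 312 TFLOP/s peak throughput, 40% utilization, and 1,024-GPU cluster size are illustrative assumptions.

```python
import math

PARAMS = 100 * 10**9           # 100B-parameter model
BYTES_PER_PARAM = 16           # mixed-precision Adam: weights, grads, optimizer states
GPU_MEMORY_BYTES = 80 * 10**9  # assumed 80 GB accelerator
TOKENS = 2 * 10**12            # assumed 2T training tokens
PEAK_FLOPS = 312e12            # assumed peak dense BF16 throughput per GPU
UTILIZATION = 0.40             # assumed fraction of peak actually achieved
NUM_GPUS = 1024                # assumed cluster size

def min_gpus_for_training_state(params: int) -> int:
    """GPUs needed just to hold weights, gradients, and optimizer state (activations excluded)."""
    return math.ceil(params * BYTES_PER_PARAM / GPU_MEMORY_BYTES)

def training_days(params: int, tokens: int, num_gpus: int) -> float:
    """Wall-clock days using the ~6 * N * D training-FLOPs approximation."""
    total_flops = 6 * params * tokens
    cluster_flops_per_s = num_gpus * PEAK_FLOPS * UTILIZATION
    return total_flops / cluster_flops_per_s / 86_400

if __name__ == "__main__":
    print("GPUs to hold training state:", min_gpus_for_training_state(PARAMS))
    print(f"Days on {NUM_GPUS} GPUs: {training_days(PARAMS, TOKENS, NUM_GPUS):.0f}")
```

Under these assumptions, the training state alone is about twenty times larger than one GPU's memory, and the compute budget works out to several months on a thousand GPUs.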

✅ Real-Time Intelligence

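For applications requiring immediate responses, such as fraud detection, autonomous vehicles, or real-time recommendation engines, low-latency inference is crucial; when even a network round trip to the cloud is too slow, as in autonomous vehicles, inference must run at the edge.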

🌐 Edge AI: Moving inference closer to data sources (e.g., IoT sensors, smartphones) reduces latency and bandwidth usage.

✅ Hybrid/Multi-Cloud Flexibility

Enterprises with strict regulatory requirements or legacy systems benefit from hybrid or multi-cloud strategies, where AI workloads can be flexibly moved between on-premise data centers, public clouds (AWS, Azure, GCP), and private clouds—while maintaining compliance and data sovereignty.

🔐 Use Case: A healthcare provider trains models on-premises to keep protected health information under its direct control (supporting HIPAA compliance) but deploys inference in the public cloud for scalability.

🛠️ How to Build & Implement AI Cloud Architecture

Implementing AI cloud architecture requires a structured, phased approach. Follow these five steps:

1. Establish a Secure Data Foundation

- Build data lakes or data warehouses capable of ingesting streaming and batch data.
- Implement data governance, lineage tracking, and access controls.
- Use tools like Apache Kafka, AWS Glue, or Google Dataflow for real-time data ingestion.
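The governance and lineage points can be illustrated with a small batch-ingestion sketch: validate records against a schema, then record where the batch came from, a SHA-256 fingerprint of what was accepted, and when. The schema, source name, and `LineageRecord` fields are hypothetical; in production, a tool such as Kafka, AWS Glue, or Dataflow would supply the records and a catalog service would store the lineage.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical schema for incoming transaction events.
SCHEMA = {"user_id": str, "amount": float}

@dataclass(frozen=True)
class LineageRecord:
    source: str          # where the batch came from
    batch_sha256: str    # fingerprint of the accepted records
    accepted: int
    rejected: int
    ingested_at: str     # UTC timestamp, ISO 8601

def is_valid(record: dict) -> bool:
    """Accept a record only if every schema field is present with the expected type."""
    return all(isinstance(record.get(name), typ) for name, typ in SCHEMA.items())

def ingest_batch(source: str, records: list[dict]) -> tuple[list[dict], LineageRecord]:
    accepted = [r for r in records if is_valid(r)]
    # Canonical JSON (sorted keys) so the same data always hashes the same way.
    payload = json.dumps(accepted, sort_keys=True).encode("utf-8")
    lineage = LineageRecord(
        source=source,
        batch_sha256=hashlib.sha256(payload).hexdigest(),
        accepted=len(accepted),
        rejected=len(records) - len(accepted),
        ingested_at=datetime.now(timezone.utc).isoformat(),
    )
    return accepted, lineage

if __name__ == "__main__":
    batch = [
        {"user_id": "u1", "amount": 12.5},
        {"user_id": "u2", "amount": "12.5"},  # wrong type: rejected
        {"amount": 3.0},                      # missing user_id: rejected
    ]
    rows, lineage = ingest_batch("payments-api", batch)
    print(lineage)
```

Because the fingerprint is computed from canonical JSON, re-ingesting identical data yields the same hash, which makes it easy to trace which exact batch a model was trained on.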

2. Select the Right Cloud Infrastructure

Choose cloud providers and services tailored for AI:

- AWS: SageMaker, EC2 GPU instances (P4, G5), S3
- Azure: Azure ML, GPU VMs, Blob Storage, Databricks
- GCP: Vertex AI, TPU pods, BigQuery, Cloud Storage

🎯 Tip: Use GPU/TPU-optimized instances for training, with spot or preemptible capacity for jobs that checkpoint and can resume after an interruption; for inference, right-size instances or use serverless to cut costs.
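Whether interruptible capacity pays off can be estimated with simple arithmetic. This sketch compares on-demand and spot/preemptible pricing for a training job that checkpoints, inflating the spot hours by the work lost to interruptions. The hourly price, discount, and rework fraction are hypothetical placeholders, not quotes from any provider.

```python
def on_demand_cost(hours: float, hourly_price: float) -> float:
    """Cost of running the job uninterrupted at the on-demand rate."""
    return hours * hourly_price

def spot_cost(hours: float, hourly_price: float, discount: float, rework_fraction: float) -> float:
    """Spot cost when interruptions force re-running `rework_fraction` of the job.

    Assumes the job checkpoints regularly, so only the work done since the
    last checkpoint is lost on each interruption.
    """
    effective_hours = hours * (1 + rework_fraction)
    return effective_hours * hourly_price * (1 - discount)

if __name__ == "__main__":
    HOURS = 100       # hypothetical job length on a GPU instance
    PRICE = 32.0      # hypothetical on-demand $/hour
    DISCOUNT = 0.70   # hypothetical spot discount
    REWORK = 0.15     # hypothetical fraction of work repeated after interruptions
    print(f"on-demand: ${on_demand_cost(HOURS, PRICE):,.0f}")
    print(f"spot:      ${spot_cost(HOURS, PRICE, DISCOUNT, REWORK):,.0f}")
```

Spot stays cheaper as long as (1 + rework_fraction) × (1 − discount) < 1, which is why frequent checkpointing, which keeps the rework fraction small, matters so much.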

3. Implement MLOps Practices

Automate the entire AI lifecycle:

- Version control for data, code, and models (using DVC, MLflow, or Git).
- CI/CD pipelines for model retraining and deployment.
- Model monitoring for performance degradation, data drift, and bias.

🔄 MLOps = DevOps for AI: it ensures reproducibility, reliability, and traceability.
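Two MLOps ideas from this step, content-addressed versioning and an automated promotion gate in a CI/CD pipeline, can be sketched in a few lines. DVC and MLflow provide production-grade implementations of these ideas; the functions, in-memory registry, metric names, and latency budget below are hypothetical and do not reflect those tools' APIs.

```python
import hashlib
import json

def content_hash(artifact: object) -> str:
    """Version ID derived from content: identical data or params always get the same ID."""
    blob = json.dumps(artifact, sort_keys=True).encode("utf-8")
    return hashlib.sha256(blob).hexdigest()[:12]

# Hypothetical in-memory registry: model name -> list of version records.
REGISTRY: dict[str, list[dict]] = {}

def register(name: str, data: object, params: dict, metrics: dict) -> dict:
    """Record which data and hyperparameters produced a model, plus its evaluation metrics."""
    version = {
        "data_version": content_hash(data),
        "params_version": content_hash(params),
        "metrics": metrics,
    }
    REGISTRY.setdefault(name, []).append(version)
    return version

def should_promote(candidate: dict, baseline: dict, max_p95_latency_ms: float = 100.0) -> bool:
    """CI gate: deploy only if accuracy does not regress and latency stays within budget."""
    return (
        candidate["accuracy"] >= baseline["accuracy"]
        and candidate["p95_latency_ms"] <= max_p95_latency_ms
    )

if __name__ == "__main__":
    data = [[0.1, 1], [0.4, 0], [0.9, 1]]
    v1 = register("churn", data, {"lr": 0.01}, {"accuracy": 0.91, "p95_latency_ms": 40.0})
    v2 = register("churn", data, {"lr": 0.005}, {"accuracy": 0.93, "p95_latency_ms": 45.0})
    print("same data version:", v1["data_version"] == v2["data_version"])
    print("promote v2:", should_promote(v2["metrics"], v1["metrics"]))
```

Hashing content rather than assigning sequential IDs means two runs on the same data are recognizably identical, which is the basis for reproducibility and traceability.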

4. Optimize for Performance & Cost

- Use auto-scaling groups to adjust compute based on demand.
- Leverage spot instances and preemptible VMs for non-critical training jobs.
- Employ data compression, caching, and tiered storage to reduce costs.
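Auto-scaling decisions usually reduce to a proportional rule. The Kubernetes Horizontal Pod Autoscaler, for example, documents its core calculation as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue) and skips changes when the ratio is within a tolerance (0.1 by default). The sketch below re-implements that formula in Python for an inference service; the metric (requests per second per replica), target, and replica limits are illustrative assumptions, and the real controller adds stabilization windows and other behaviors not shown here.

```python
import math

def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 20,
    tolerance: float = 0.1,
) -> int:
    """Proportional scaling rule, following the Kubernetes HPA formula."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: avoid flapping
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))

if __name__ == "__main__":
    # Hypothetical target: 50 requests/s per replica.
    print(desired_replicas(4, current_metric=75, target_metric=50))  # scale out to 6
    print(desired_replicas(4, current_metric=52, target_metric=50))  # within tolerance: 4
    print(desired_replicas(8, current_metric=25, target_metric=50))  # scale in to 4
```

The min/max bounds cap spend during traffic spikes while keeping a floor of capacity for latency-sensitive inference.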

5. Embed Governance & Ethical AI

Integrate security and compliance from day one:

- Encrypt data at rest and in transit.
- Implement role-based access control (RBAC).
- Monitor for model bias, fairness, and explainability (XAI).
- Ensure adherence to regulations such as GDPR, CCPA, and HIPAA.

🛡️ Proactive governance prevents costly failures and reputational damage.
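Role-based access control can be sketched as a mapping from roles to permissions, with every action denied unless some role grants it and every decision logged for audit. The roles, users, and permission names below are hypothetical; cloud providers implement RBAC through their own IAM services, and Kubernetes has its own RBAC API.

```python
from enum import Enum

class Permission(Enum):
    READ_TRAINING_DATA = "read_training_data"
    TRAIN_MODEL = "train_model"
    DEPLOY_MODEL = "deploy_model"
    VIEW_METRICS = "view_metrics"

# Hypothetical role definitions for an ML platform.
ROLE_PERMISSIONS: dict[str, set[Permission]] = {
    "data_scientist": {Permission.READ_TRAINING_DATA, Permission.TRAIN_MODEL, Permission.VIEW_METRICS},
    "ml_engineer": {Permission.TRAIN_MODEL, Permission.DEPLOY_MODEL, Permission.VIEW_METRICS},
    "auditor": {Permission.VIEW_METRICS},
}

# Hypothetical user-to-role assignments.
USER_ROLES: dict[str, set[str]] = {
    "alice": {"data_scientist"},
    "bob": {"ml_engineer"},
    "carol": {"auditor"},
}

AUDIT_LOG: list[tuple[str, str, bool]] = []

def is_allowed(user: str, permission: Permission) -> bool:
    """Deny by default: allow only if one of the user's roles grants the permission."""
    roles = USER_ROLES.get(user, set())
    allowed = any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)
    AUDIT_LOG.append((user, permission.value, allowed))  # record every decision
    return allowed

if __name__ == "__main__":
    print(is_allowed("alice", Permission.DEPLOY_MODEL))  # data scientists cannot deploy
    print(is_allowed("bob", Permission.DEPLOY_MODEL))
    print(AUDIT_LOG)
```

Separating who may train models from who may deploy them is a simple way to enforce review before anything reaches production.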

🔑 Key Concepts in AI Cloud Architecture

Understanding these foundational concepts is critical for designing effective AI systems:

| Concept | Explanation |
|---|---|
| MLOps (Machine Learning Operations) | A discipline that combines ML, DevOps, and data engineering to automate and streamline the model lifecycle. |
| Data Gravity | The tendency of large datasets to attract applications and services, because moving them across networks is slow and costly. Solution: Place compute near data (e.g., on-premises or in the same cloud region). |
| Model Serving / Inference | The process of deploying a trained model to make predictions. Can be real-time (APIs) or batch (scheduled jobs). |
| Edge AI | Running AI models directly on edge devices (cameras, sensors, phones) to reduce latency and bandwidth. |
| Scalability & Cost Optimization | Using auto-scaling, spot instances, and efficient storage to manage variable workloads and reduce cloud spend. |

These principles guide architects toward resilient, efficient, and future-proof designs.
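Data gravity is easy to quantify. The sketch below estimates how long it takes to move a dataset over a network link; the 1 PB dataset, 10 Gbps link, and 70% effective throughput are illustrative assumptions, and egress charges, which often matter just as much, are left out.

```python
def transfer_days(dataset_bytes: float, link_bits_per_s: float, efficiency: float = 1.0) -> float:
    """Days needed to move a dataset over a link running at `efficiency` of its nominal rate."""
    seconds = dataset_bytes * 8 / (link_bits_per_s * efficiency)
    return seconds / 86_400

if __name__ == "__main__":
    ONE_PB = 10**15          # bytes (decimal petabyte)
    TEN_GBPS = 10 * 10**9    # bits per second
    print(f"ideal link:     {transfer_days(ONE_PB, TEN_GBPS):.1f} days")
    print(f"70% efficiency: {transfer_days(ONE_PB, TEN_GBPS, 0.70):.1f} days")
```

More than nine days for a single petabyte, even on an ideal 10 Gbps link, is why architects usually move compute to the data rather than the reverse.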

🌐 Common Deployment Models

Choose the right deployment model based on your business needs:

| Model | Pros | Cons | Best For |
|---|---|---|---|
| Public Cloud | Rapid provisioning, elastic and near-unlimited scalability, rich AI services (SageMaker, Vertex AI) | Potential data sovereignty concerns | Startups, innovation teams, scalable AI apps |
| Private Cloud | Full control, enhanced security, compliance | High setup cost, limited scalability | Financial institutions, government agencies |
| Hybrid Cloud | Balances security and flexibility; allows workloads to move between on-prem and cloud | Complex integration | Enterprises with legacy systems and strict compliance needs |
| Multi-Cloud | Avoids vendor lock-in, enables optimal service selection | Increased complexity in management | Large enterprises seeking redundancy and cost efficiency |

🔄 Trend: Most enterprises adopt hybrid/multi-cloud strategies to balance agility, security, and cost.

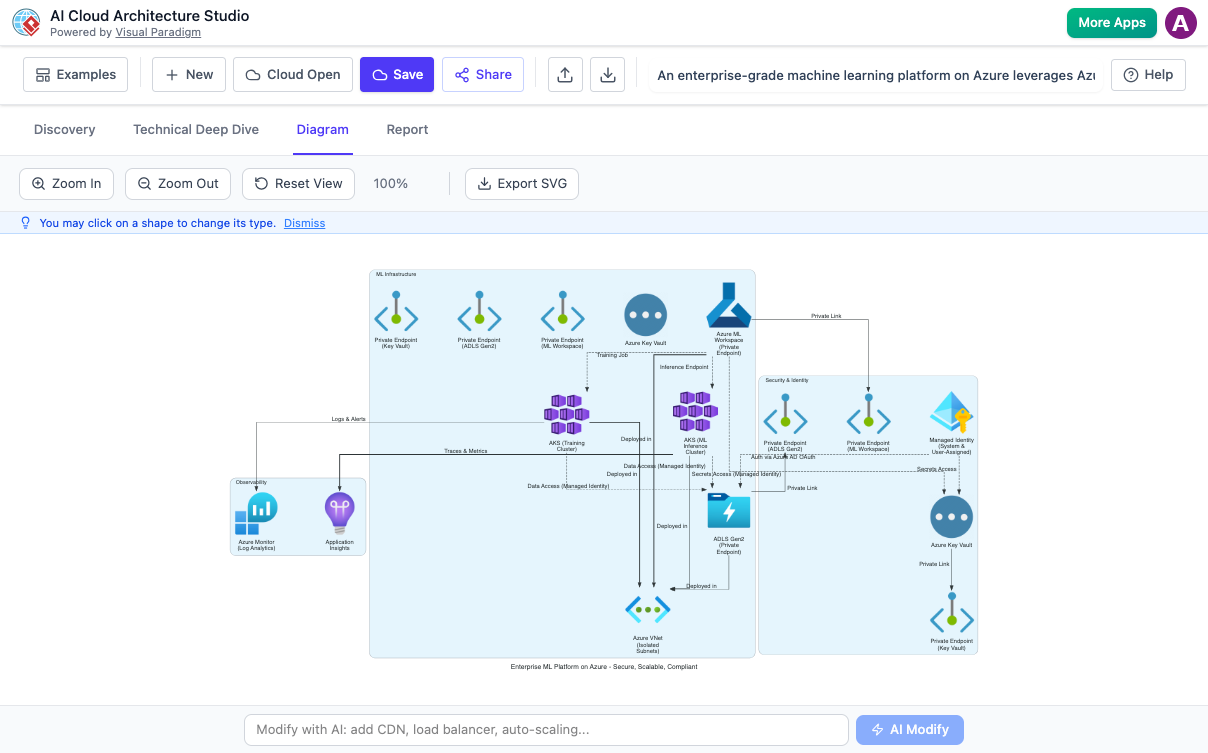

🛠️ Visual Paradigm’s AI Cloud Architecture Studio: A Game-Changer

As AI systems grow in complexity, visual modeling becomes indispensable. Enter Visual Paradigm’s AI Cloud Architecture Studio—a cutting-edge tool designed to simplify and accelerate the design of AI-driven cloud architectures.

🌟 Features & Capabilities:

- AI-Powered Modeling: Generates architecture diagrams from natural language prompts.
- Multi-Cloud Support: Designs for AWS, Azure, GCP, and hybrid environments.
- Integrated MLOps Workflows: Visualizes CI/CD pipelines, model versioning, and monitoring.
- Real-Time Collaboration: Teams can co-design and annotate architectures in real time.
- Auto-Documentation: Automatically generates technical documentation, compliance reports, and deployment plans.

📚 Resources:

- AI Cloud Architecture Studio – Visual Paradigm: An official feature overview of Visual Paradigm’s AI Cloud Architecture Studio, detailing its capabilities, multi-cloud support, and integration with AI-driven workflows.

- Revolutionizing Cloud Design: A Deep Dive into Visual Paradigm’s AI Cloud Architecture Studio: A comprehensive analysis of the tool’s AI capabilities, workflow, and real-world applications in enterprise cloud architecture.

- AI Cloud Architecture Studio Launch Announcement: The official release notes from Visual Paradigm, announcing the launch of the tool in February 2026, including key features and initial availability.

- AI Cloud Architecture Studio – Visual Paradigm AI Portal: The dedicated web portal for accessing the AI Cloud Architecture Studio, featuring live demos, tutorials, and user guides.

- AI Cloud Architecture Studio – AI Toolbox by Visual Paradigm: A centralized hub for AI-powered modeling tools, including access to the Cloud Architecture Studio and related AI features.