Designing a robust database structure begins with a precise plan. The Entity Relationship Diagram (ERD) serves as the blueprint for how data will be stored, related, and accessed. However, even experienced architects can introduce subtle errors during the modeling phase. These errors often manifest later as critical data integrity violations. When data integrity fails, the reliability of the entire application is compromised. 🛑

Data integrity refers to the accuracy, consistency, and reliability of data stored within a database. It ensures that information remains unaltered and valid throughout its lifecycle. A well-constructed ERD prevents anomalies such as orphaned records, duplicate entries, and inconsistent values. This guide examines the most frequent modeling oversights that undermine these safeguards. We will explore the technical implications of each mistake and outline how to correct them. 🔍

Understanding Data Integrity in Database Design 🏗️

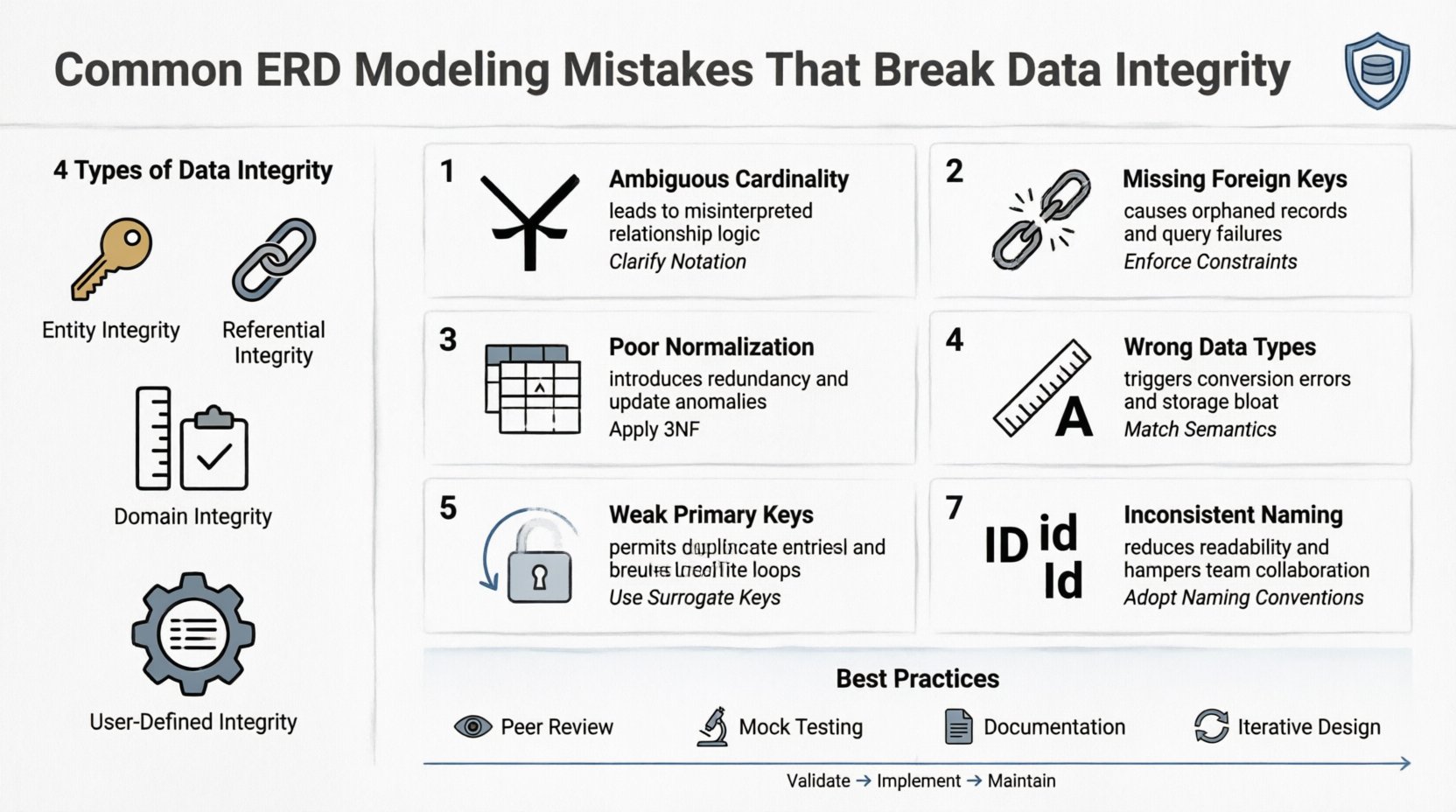

Before diving into specific errors, it is essential to define what integrity means in this context. Data integrity is not merely about preventing crashes; it is about maintaining logical rules. There are four primary types of integrity that an ERD must support:

- Entity Integrity: Ensures every table has a unique primary key. No null values are allowed in the primary key column.

- Referential Integrity: Maintains consistency between tables. A foreign key must match a primary key in the parent table or be null.

- Domain Integrity: Defines valid entries for a specific column, such as data types, length, and range constraints.

- User-Defined Integrity: Business rules specific to the organization, such as age limits or status codes.

When the ERD fails to reflect these rules, the database engine cannot enforce them automatically. This forces developers to write application-level code to check for errors, which is often slower and less reliable. A proper diagram acts as a contract between the data structure and the application logic. 🤝

Mistake 1: Ambiguous Cardinality Relationships 🔄

One of the most common pitfalls involves defining relationships without clear cardinality. Cardinality defines the numerical relationship between entities in a relationship. It specifies whether one instance of an entity relates to one, many, or zero instances of another entity.

The Problem

Modelers often draw a line between two entities without specifying the direction or the count. For example, linking a Customer to an Order without stating if a customer can have multiple orders. If the relationship is treated as one-to-one (1:1) when it should be one-to-many (1:N), data is restricted. Conversely, treating a 1:1 relationship as 1:N introduces redundancy.

The Consequence

- Data Redundancy: If a 1:1 relationship is modeled as 1:N, you may end up storing customer details in multiple order records.

- Update Anomalies: Changing a customer’s address in one record might not update it in another related record.

- Performance Degradation: Join operations become inefficient when the cardinality is not optimized.

The Solution

Always define the relationship explicitly. Use crow’s foot notation to indicate the “many” side. Ensure that every foreign key placement aligns with the intended cardinality. A foreign key belongs on the “many” side of a one-to-many relationship. For many-to-many relationships, a junction table is mandatory. This table breaks the relationship into two one-to-many relationships. 📊

Mistake 2: Ignoring Referential Integrity Constraints 🚫

Referential integrity ensures that relationships between tables remain consistent. It prevents “orphaned records,” which are rows in a child table that reference a non-existent row in the parent table.

The Problem

During modeling, architects sometimes forget to define Foreign Key constraints in the diagram. They might define the relationship visually but omit the constraint logic. This leaves the database open to invalid data entry. For instance, an Order could be placed for a Product ID that does not exist in the Product table.

The Consequence

- Cascading Errors: Deleting a parent record might leave child records without a valid link.

- Query Failures: Join queries may return unexpected results or fail entirely if the link is broken.

- Reporting Errors: Aggregation queries relying on these relationships will produce incorrect totals.

The Solution

Explicitly model foreign keys in the ERD. Indicate the action to take when a parent record is deleted or updated. Common actions include:

- CASCADE: Automatically delete or update child records when the parent is changed.

- SET NULL: Set the foreign key in the child record to null if the parent is deleted.

- RESTRICT: Prevent the deletion of the parent if child records exist.

Choosing the right action depends on the business logic. For example, you might restrict the deletion of a Supplier if active orders exist, but allow it for archived items. 🛡️

Mistake 3: Poor Normalization Practices 📉

Normalization is the process of organizing data to reduce redundancy and improve integrity. It involves dividing large tables into smaller, logically connected ones. Skipping this step or applying it incorrectly is a major source of data corruption.

The Problem

Modelers often create a single “flat” table to store everything. For example, putting customer details inside an order table. While this simplifies initial queries, it violates the principles of normalization. Specifically, it violates the Third Normal Form (3NF). It also risks violating Second Normal Form (2NF) if partial dependencies exist.

The Consequence

- Insertion Anomalies: You cannot add a new customer without an existing order.

- Deletion Anomalies: Deleting an order might accidentally remove the only record of a customer.

- Update Anomalies: If a customer changes their phone number, you must update every order record associated with them.

The Solution

Adhere to standard normalization rules during the design phase:

- First Normal Form (1NF): Ensure atomic values. No repeating groups or lists in a single cell.

- Second Normal Form (2NF): Remove partial dependencies. All non-key attributes must depend on the whole primary key.

- Third Normal Form (3NF): Remove transitive dependencies. Non-key attributes should not depend on other non-key attributes.

While normalization is crucial, consider denormalization only for read-heavy reporting systems where performance outweighs integrity risks. Always document these exceptions clearly in the model. 📝

Mistake 4: Overlooking Attribute Domains and Data Types 📏

Every column in a table has a domain, which is the set of permissible values. This includes the data type (integer, string, date) and specific constraints (length, precision, range).

The Problem

ERDs often show attributes generically. A field might be labeled “Date” without specifying if it includes time. A “Price” field might be modeled as a string instead of a decimal. This ambiguity leads to inconsistent data entry. Users might type “100.00” in one place and “100” in another, causing sorting and calculation errors.

The Consequence

- Calculation Errors: Treating numbers as text prevents mathematical operations.

- Storage Waste: Using a generic string type for dates consumes more space than a native date type.

- Validation Gaps: The database cannot enforce that a “Price” must be greater than zero.

The Solution

Define precise domains for every attribute in the diagram. Specify the exact data type and any length limits. For monetary values, use decimal types with fixed precision. For dates, specify the format (YYYY-MM-DD). Include constraints for mandatory fields and allowed ranges. This ensures that the database engine rejects invalid data at the source. 💰

Mistake 5: Circular References and Recursive Relationships 🌀

Recursive relationships occur when an entity relates to itself. A common example is an Employee table where each employee has a Manager who is also an employee. Modeling this incorrectly can lead to infinite loops or data inconsistency.

The Problem

Designers sometimes create a foreign key without defining the hierarchy limits. If the recursion is not handled, queries can become infinite. Furthermore, if the self-reference allows cycles (e.g., A manages B, B manages C, C manages A), data integrity regarding hierarchy levels is lost.

The Consequence

- Query Timeouts: Recursive queries without depth limits will crash the system.

- Invalid Hierarchies: Circular management chains confuse reporting structures.

- Data Ambiguity: It becomes unclear who the root of the hierarchy is.

The Solution

Define the recursive relationship carefully. Ensure the foreign key is nullable to allow for root nodes (like a CEO). Implement application-level or database-level checks to prevent cycles. Use depth columns or path strings if complex hierarchy traversal is required. Document the maximum depth of the hierarchy in the design specifications. 👤

Mistake 6: Lack of Unique Constraints on Primary Keys 🔑

The primary key is the unique identifier for a record. It is the foundation of entity integrity. If the primary key is not enforced as unique, duplicate records can exist.

The Problem

Some models suggest a surrogate key (like an auto-increment ID) but fail to mark it as the primary key in the diagram. Alternatively, natural keys (like a Social Security Number) are used without a unique constraint. This allows the database to accept duplicate entries for the same logical entity.

The Consequence

- Duplicate Data: The same customer or product appears multiple times.

- Update Confusion: Updates might apply to only one of the duplicate records.

- Join Ambiguity: Queries joining on the key may return multiple rows unexpectedly.

The Solution

Always designate the primary key clearly in the ERD. Mark it with a key icon or specific notation. Ensure the column is defined as NOT NULL. If using a natural key, add a unique constraint to prevent duplicates. For surrogate keys, ensure the generation mechanism is reliable and conflict-free. 🔒

Mistake 7: Inconsistent Naming Conventions 🏷️

While this seems cosmetic, naming conventions directly impact data integrity. Inconsistent names lead to confusion and duplicate entity creation.

The Problem

One table might use user_id, while another uses UserID or userIdentifier. When developers build queries, they might mix these up. They might join on the wrong column or create new columns that duplicate existing data because they didn’t recognize the synonym.

The Consequence

- Integration Failures: Data from different modules cannot be joined correctly.

- Maintenance Burden: Developers spend time deciphering what each column means.

- Schema Drift: Over time, the database structure becomes fragmented and inconsistent.

The Solution

Establish a strict naming standard. Use lowercase with underscores for column names. Use plural nouns for table names (e.g., orders, not order). Ensure that related entities use the same foreign key names. Document these conventions in a data dictionary. This consistency reduces the cognitive load on developers and minimizes errors. 📖

Summary of Common Modeling Errors

| Mistake Category | Primary Risk | Recommended Fix |

|---|---|---|

| Ambiguous Cardinality | Redundancy or Data Restriction | Define 1:1, 1:N, M:N explicitly |

| Missing Foreign Keys | Orphaned Records | Enforce Referential Integrity constraints |

| Poor Normalization | Update/Insert Anomalies | Apply 1NF, 2NF, 3NF rules |

| Incorrect Data Types | Calculation & Validation Errors | Specify precise domains and types |

| Recursive Loops | Query Timeouts | Limit hierarchy depth and check for cycles |

| Weak Primary Keys | Duplicate Records | Enforce Unique + NOT NULL |

| Inconsistent Naming | Integration Failures | Adopt a strict naming standard |

Strategies for Robust ERD Design 🛠️

Preventing these mistakes requires a disciplined approach. It is not enough to simply draw the lines; you must validate the logic. Here are strategies to ensure your models hold up under scrutiny.

- Peer Review: Have another architect review the diagram. Fresh eyes often spot logical gaps that the creator misses.

- Mock Data Testing: Before implementation, populate a test database with sample data. Attempt to violate the rules you designed. See if the system stops you.

- Documentation: Write a data dictionary alongside the ERD. Explain the business rule behind every relationship and constraint.

- Iterative Design: Do not expect the first version to be perfect. Refine the model as business requirements evolve.

Validation Techniques Before Implementation 🧪

Once the ERD is finalized, validation is the next critical step. This process ensures that the design translates correctly into the physical schema.

- Script Generation: Use tools to generate SQL scripts from the diagram. Review the generated script for syntax errors or missing constraints.

- Constraint Verification: Check that every foreign key in the script matches a primary key in the parent table.

- Index Analysis: Ensure that foreign keys and unique constraints are indexed for performance.

- Edge Case Review: Consider null values. Can a mandatory field be null in your design? If not, mark it as NOT NULL explicitly.

This phase catches implementation errors that do not appear in the visual diagram. It bridges the gap between theory and reality. 🔬

Maintaining Schema Over Time 🔄

Database design is not a one-time event. Requirements change, and the schema must evolve without breaking existing data integrity. When modifying the ERD, follow these guidelines.

- Version Control: Keep a history of schema changes. This allows you to revert if a change introduces errors.

- Backward Compatibility: When adding columns, allow them to be nullable initially. Do not break existing queries that do not expect the new data.

- Migration Scripts: Never alter a table directly in production without a migration script. Scripts ensure the change is reproducible and safe.

- Communication: Notify application teams of schema changes. They must update their code to match the new structure.

By treating the ERD as a living document, you ensure that data integrity remains intact throughout the software’s lifecycle. Consistency is the key to long-term reliability. 📈

Handling Legacy Data Migration 🔄

Sometimes, you must migrate data into a new structure that adheres to better integrity rules. This process introduces specific risks.

- Data Cleansing: Before migration, clean the source data. Remove duplicates and fix formatting errors.

- Mapping Validation: Ensure every source field maps to a valid target field with the correct type.

- Constraint Testing: Run the integrity constraints on the migrated data before making it live.

- Rollback Plan: Have a plan to revert to the old system if the migration fails or corrupts data.

Integrity violations are costly to fix after deployment. Preventing them at the modeling stage saves time, money, and user trust. Focus on precision, clarity, and adherence to relational theory. A solid foundation supports all future development. 🏛️