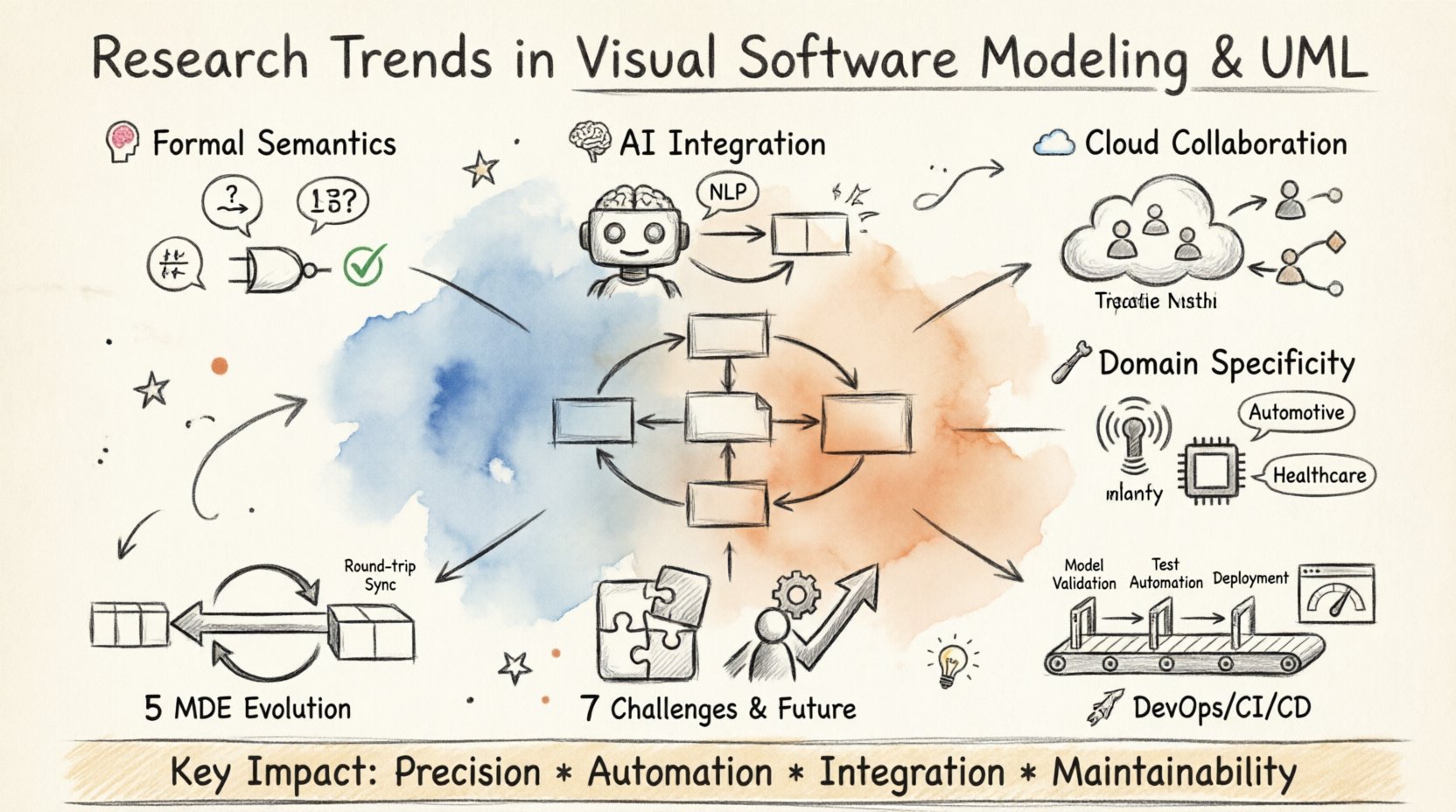

💡 Key Takeaways

- Formal Semantics: Modern modeling increasingly relies on mathematical foundations to ensure correctness and verification.

- AI Integration: Machine learning models are now being used to generate and validate diagrams automatically.

- Collaboration: Cloud-based environments facilitate real-time co-design across distributed engineering teams.

- Domain Specificity: General-purpose notations are evolving to support specialized industry domains like IoT and automotive.

The landscape of software architecture continues to shift. Visual software modeling, particularly through the Unified Modeling Language (UML), remains a cornerstone in system design. However, the tools and methodologies surrounding these diagrams are undergoing significant transformation. This article examines the prevailing research trends shaping how we visualize and validate complex systems today.

The Shift from Syntax to Semantics 🧠

For decades, the primary focus of modeling was syntactic correctness. Ensuring a class diagram adhered to the grammar rules of UML was the baseline requirement. Current research, however, prioritizes semantic precision. The goal is not just drawing a box and an arrow, but defining the exact meaning of that connection.

Researchers are exploring formal methods that overlay UML with mathematical logic. This approach allows for automated reasoning about the model itself. Instead of relying solely on human inspection to find logical flaws, tools can now verify properties such as deadlock freedom or state reachability directly from the visual representation.
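As a rough illustration of what such automated reasoning can look like, the sketch below treats a state machine diagram as a plain transition table and checks two properties: which states are reachable from the initial state, and whether any reachable non-final state has no way out (a simple form of deadlock). The state names, the `TRANSITIONS` table, and both function names are hypothetical; real verification tools work on far richer semantics than this.

```python
from collections import deque

# Hypothetical state machine: state -> {event: next_state}
TRANSITIONS = {
    "Idle": {"start": "Running"},
    "Running": {"pause": "Paused", "finish": "Done"},
    "Paused": {"resume": "Running"},
    "Done": {},
    "Orphan": {"start": "Running"},  # nothing leads here
}
FINAL_STATES = {"Done"}


def reachable_states(transitions, initial):
    """Breadth-first search over the transition graph."""
    seen = {initial}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        for target in transitions.get(state, {}).values():
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen


def deadlocked_states(transitions, initial, final_states):
    """Reachable states with no outgoing transition that are not final."""
    return {
        state
        for state in reachable_states(transitions, initial)
        if not transitions.get(state) and state not in final_states
    }


reachable = reachable_states(TRANSITIONS, "Idle")
unreachable = set(TRANSITIONS) - reachable
deadlocks = deadlocked_states(TRANSITIONS, "Idle", FINAL_STATES)
```

Here `unreachable` flags the `Orphan` state, which a reviewer might overlook in a crowded diagram, and `deadlocks` stays empty because the only state without outgoing transitions is declared final.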

This transition addresses a critical gap in traditional engineering: the disconnect between the design diagram and the executable code. Grounding visual elements in formal semantics gives each element a single, checkable meaning, so the implementation can be compared against the model itself rather than against one engineer's reading of it.

Model-Driven Engineering (MDE) Evolution 🔄

Model-Driven Engineering has matured from a theoretical concept into a practical workflow for many organizations. The core premise remains: models are not just documentation; they are artifacts that drive code generation. Recent advancements focus on bidirectional transformation.

Traditionally, code generation flowed from model to code. If the code changed, the model often became stale. New research emphasizes round-trip engineering, where changes in the implementation are propagated back to the model. This synchronization ensures the visual representation remains a source of truth throughout the software lifecycle.
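The code-to-model half of a round trip can be sketched in a few lines. The example below is a minimal sketch, assuming the model is a plain dictionary of class names to operation names and the implementation is Python source. It uses the standard `ast` module to read the code and then propagates new classes and operations back into the model. The `MODEL` and `SOURCE` contents and the function names are hypothetical, and a real tool would also have to handle renames, attributes, and relationships.

```python
import ast

# Hypothetical model: class name -> set of operation names
MODEL = {"Order": {"add_item", "total"}, "Customer": {"rename"}}

# Hypothetical implementation that has drifted from the model
SOURCE = '''
class Order:
    def add_item(self, item): ...
    def total(self): ...
    def cancel(self): ...

class Invoice:
    def send(self): ...
'''


def classes_in_source(source):
    """Extract top-level classes and their methods from Python source."""
    found = {}
    for node in ast.parse(source).body:
        if isinstance(node, ast.ClassDef):
            found[node.name] = {
                item.name for item in node.body
                if isinstance(item, ast.FunctionDef)
            }
    return found


def sync_model_from_code(model, source):
    """Return an updated copy of the model plus a report of what changed."""
    code = classes_in_source(source)
    updated = {name: set(ops) for name, ops in model.items()}
    report = []
    for cls, ops in code.items():
        if cls not in updated:
            updated[cls] = set(ops)
            report.append(f"add class {cls}")
        else:
            for op in sorted(ops - updated[cls]):
                updated[cls].add(op)
                report.append(f"add operation {cls}.{op}")
    # Elements that exist only in the model are reported, not deleted.
    for cls in sorted(set(model) - set(code)):
        report.append(f"class {cls} missing from code")
    return updated, report


updated_model, report = sync_model_from_code(MODEL, SOURCE)
```

Reporting model-only classes instead of deleting them is a deliberate choice in this sketch: a class missing from the code may be planned work rather than stale design.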

The complexity of modern systems requires more than simple boilerplate generation. Research now targets domain-specific code generation that adapts to the architectural style of the project. This allows teams to maintain high-level abstractions while still producing optimized, production-ready artifacts.

Artificial Intelligence and Automated Modeling 🤖

The integration of Artificial Intelligence into modeling tools is perhaps the most visible trend. Natural Language Processing (NLP) allows engineers to describe system requirements in plain text, which the tool then converts into diagrams. This lowers the barrier to entry for complex modeling tasks.

Beyond generation, AI is being applied to model improvement. Algorithms analyze existing diagrams to suggest optimizations, identify redundancies, or detect design patterns that were missed. This acts as an intelligent review mechanism, providing feedback that complements human expertise.

Furthermore, predictive analytics are being used to assess the quality of a design before implementation begins. By training models on historical project data, systems can predict potential maintenance costs or failure points based on the structure of the diagram alone.

Collaborative and Cloud-Based Environments ☁️

Software development is increasingly distributed. Remote work and global teams necessitate a shift from local file-based modeling to collaborative cloud platforms. This allows multiple stakeholders to edit and view models simultaneously.

Research in this area focuses on conflict resolution and version control for visual data. Unlike textual source code, which can be merged line by line, a diagram is a graph of elements with layout and properties, so concurrent edits can interact in ways a text diff does not capture. New algorithms manage concurrent edits so that changes from different users merge correctly without losing data.
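One common way to think about this problem is a three-way merge at the level of individual element properties rather than lines of text. The sketch below is an illustration only, not the algorithm of any particular tool: it assumes each model version is a flat dictionary keyed by a hypothetical `(element_id, property)` pair, and that `None` is never a legitimate property value.

```python
def three_way_merge(base, ours, theirs):
    """Merge two edited copies of a model against their common ancestor.

    Returns the merged model and a list of keys both sides changed differently.
    """
    merged, conflicts = {}, []
    for key in sorted(set(base) | set(ours) | set(theirs)):
        b, o, t = base.get(key), ours.get(key), theirs.get(key)
        if o == t:
            value = o            # both sides agree (or both deleted it)
        elif o == b:
            value = t            # only "theirs" changed this property
        elif t == b:
            value = o            # only "ours" changed this property
        else:
            conflicts.append(key)
            value = o            # keep "ours" provisionally; needs review
        if value is not None:    # None means the property was deleted
            merged[key] = value
    return merged, conflicts


# Hypothetical diagram: two class boxes with a name and an x position.
base = {("c1", "name"): "Order", ("c1", "x"): 100, ("c2", "name"): "Customer"}
ours = {("c1", "name"): "Order", ("c1", "x"): 250, ("c2", "name"): "Buyer"}
theirs = {("c1", "name"): "PurchaseOrder", ("c1", "x"): 100, ("c2", "name"): "Client"}

merged, conflicts = three_way_merge(base, ours, theirs)
```

In this example one user moved box `c1` while the other renamed it, so both edits survive. Both users renamed `c2` differently, so that property is reported as a conflict for a human to resolve.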

Cloud integration also facilitates better stakeholder communication. Non-technical team members can access simplified views of the system without needing specialized modeling software. This democratizes the understanding of the architecture, aligning business goals with technical execution.

Domain-Specific Languages and Hybrid Approaches 🛠️

General-purpose modeling languages face limitations when applied to highly specialized domains. A diagram that works well for web applications may not capture the nuances of safety-critical automotive systems or IoT networks.

Consequently, there is a strong trend toward Domain-Specific Modeling (DSM). Researchers are developing notations tailored to specific industries. These domain-specific languages (DSLs) inherit the visual clarity of UML but add concepts and constraints relevant to their field.

Hybrid approaches are also gaining traction. These frameworks allow a general-purpose model to be extended with domain-specific annotations. This provides flexibility, enabling teams to use standard notations while embedding specialized metadata where necessary.
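A hybrid model of this kind can be pictured as ordinary model elements carrying a free-form annotation map, with each domain declaring which annotations it expects. The sketch below assumes a hypothetical `ModelElement` class and a hypothetical rule that automotive elements must state a safety level under the key `asil`; neither comes from a specific framework.

```python
from dataclasses import dataclass, field


@dataclass
class ModelElement:
    """A general-purpose element extended with domain-specific annotations."""
    name: str
    kind: str
    annotations: dict = field(default_factory=dict)


# Hypothetical rule set: domain -> annotation keys that must be present
REQUIRED_BY_DOMAIN = {"automotive": ["asil"]}


def missing_annotations(elements, required_by_domain):
    """List (element name, annotation key) pairs the domain rules require."""
    problems = []
    for element in elements:
        domain = element.annotations.get("domain")
        for key in required_by_domain.get(domain, []):
            if key not in element.annotations:
                problems.append((element.name, key))
    return problems


elements = [
    ModelElement("BrakeController", "component", {"domain": "automotive", "asil": "D"}),
    ModelElement("Infotainment", "component", {"domain": "automotive"}),
    ModelElement("Logger", "component"),  # no domain, so no extra rules apply
]

problems = missing_annotations(elements, REQUIRED_BY_DOMAIN)
```

Elements without a domain annotation pass untouched, which is the flexibility the hybrid approach aims for: standard notation by default, specialized metadata only where it matters.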

Integration with DevOps and CI/CD 🚀

The separation between design and deployment is narrowing. In modern pipelines, models are not static artifacts created at the start of a project. They are integrated into Continuous Integration and Continuous Deployment (CI/CD) workflows.

Automated testing of models is becoming standard practice. Before code is merged, the model undergoes validation checks. If the model violates defined constraints, the pipeline halts. This shifts quality assurance earlier in the process, reducing the cost of fixing defects.
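A validation gate of this sort can be quite small. The following sketch assumes the model has been exported to a dictionary with hypothetical `classes` and `associations` keys, and applies two made-up constraints: every association must reference a known class, and class names must start with an uppercase letter. The function names and the constraints themselves are illustrative assumptions.

```python
import sys

# Hypothetical exported model
MODEL = {
    "classes": {"Order": {}, "customer": {}},
    "associations": [("Order", "customer"), ("Order", "Invoice")],
}


def validate(model):
    """Return a list of human-readable constraint violations."""
    errors = []
    names = set(model["classes"])
    for source, target in model["associations"]:
        for end in (source, target):
            if end not in names:
                errors.append(
                    f"association {source}->{target} references unknown class {end}"
                )
    for name in model["classes"]:
        if not name[:1].isupper():
            errors.append(f"class name '{name}' should start with an uppercase letter")
    return errors


def run_gate(model):
    """Print violations to stderr and return a process exit code."""
    errors = validate(model)
    for error in errors:
        print(error, file=sys.stderr)
    return 1 if errors else 0


exit_code = run_gate(MODEL)
```

A pipeline step could pass the result to `sys.exit`, so that any non-zero code stops the merge in the same way a failing unit test would.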

Visualization tools are also being embedded into dashboards. Engineers can see the impact of a deployment on the system architecture in real time. This feedback loop helps teams understand the consequences of changes as they happen, rather than weeks later.

Challenges and Future Directions 🌐

Despite these advancements, challenges remain. The complexity of models can grow rapidly as systems scale, since the number of possible relationships between elements rises faster than the number of elements themselves. Managing this complexity without overwhelming the user is a key research focus. Techniques such as abstraction, curation, and dynamic view generation are being refined to handle large-scale architectures.
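Abstraction and view generation can be illustrated with a simple case: collapsing a class-level dependency graph into a package-level view. The sketch below assumes a hypothetical mapping from classes to packages and a list of class-to-class dependencies. It shows only the general idea, not how any particular tool builds its views.

```python
# Hypothetical detailed model
CLASS_TO_PACKAGE = {
    "Order": "sales",
    "Customer": "sales",
    "Invoice": "billing",
    "Ledger": "billing",
    "Mailer": "notifications",
}
DEPENDENCIES = [
    ("Order", "Customer"),
    ("Order", "Invoice"),
    ("Invoice", "Ledger"),
    ("Invoice", "Mailer"),
    ("Customer", "Mailer"),
]


def package_view(class_to_package, dependencies):
    """Collapse class-level dependencies into unique package-level edges."""
    edges = set()
    for source, target in dependencies:
        source_pkg = class_to_package[source]
        target_pkg = class_to_package[target]
        if source_pkg != target_pkg:  # hide dependencies internal to a package
            edges.add((source_pkg, target_pkg))
    return sorted(edges)


view = package_view(CLASS_TO_PACKAGE, DEPENDENCIES)
```

Five class-level dependencies reduce to three package-level edges here. The same derivation can be rerun whenever the detailed model changes, so the overview is always generated from the model and never drawn by hand.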

Interoperability between different modeling tools is another hurdle. Data exchange standards are improving, but seamless integration across the toolchain is still a work in progress. Research continues to standardize metadata exchange formats to ensure portability.

The human factor remains central. Technology cannot replace the intuition and creativity of the architect. The goal of these trends is to augment human capability, not replace it. Tools that reduce cognitive load and highlight critical risks are the most valuable assets in this evolving landscape.

Summary of Impact 📈

Visual software modeling is moving towards greater precision, automation, and integration. By embracing formal semantics, leveraging AI, and adopting collaborative cloud environments, the industry is building systems that are more robust and easier to maintain. These trends reflect a maturing approach to software architecture, one that treats the model as a dynamic, living artifact rather than a static document.